Parental controls were also introduced by Meta for its AI models this past week. The new GUARD Act, introduced by Senators Josh Hawley, a Republican from Missouri, and Richard Blumenthal, a Democrat from Connecticut, is intended to protect children in their interactions with AI. "These chatbots can manipulate emotions and influence behavior in ways that exploit the developmental vulnerabilities of minors," the bill states.

Schedule daily check-ins so you always have someone asking how your day went, without judgment, without obligation, and without the social energy that human interaction sometimes demands. Your companion remembers what you shared before, follows up on what matters, and offers a steady presence during the quiet stretches when human contact isn't available.

The CHATBOT Act was introduced April 28 by Sens. Ted Cruz, R-Texas; Brian Schatz, D-Hawaii; John Curtis, R-Utah; and Adam Schiff, D-Calif. The bipartisan legislation would require AI companies to create "family accounts" so that parents of children under the age of 13 can oversee and control their AI chatbot use. Children would need parental consent to access these tools, and companies would be prohibited from targeting advertising to children. Companies would also have to take "reasonable measures" to prevent AI companions from generating sexually explicit content or forming prolonged emotional relationships with minors.

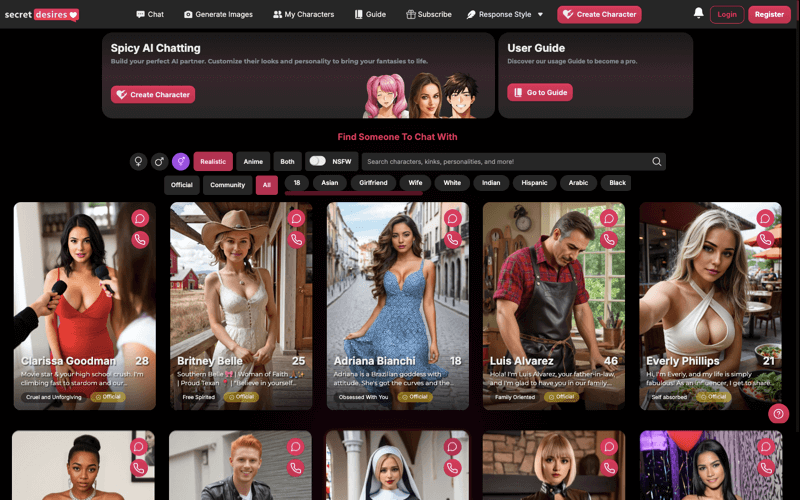

I evaluated 26 AI companion apps across iOS and Android, scoring each on real user reviews, feature depth, and long-term value. Copilot Chat provides a consistent way to re-engage with your work context whenever you need it. Whether you're resuming a task or catching up on progress, the conversation picks up where you left off, helping you stay productive on your own time.

Memory-Rich Conversations

Between 2020 and 2025, 204 cases were reported nationwide, 177 of them involving Indigenous girls, mainly in the departments of Risaralda and Chocó. Experts warn that these figures significantly underestimate the true prevalence because FGM is persistently underreported. Without accurate data, it is difficult to assess the extent of the problem and design appropriate responses.

Create AI-generated images

Honesty about capabilities matters more here than in any other app category, because the stakes involve emotional wellbeing and the potential for misplaced trust. Decide whether you want to create images for inspiration, storytelling, or polished headshots. Your goals, your routines, the people and things you care about. Three of 4 churned accounts were Starter users who never set up session replay.

- Combining large-scale data from the discussion platform Reddit with in-depth interviews, the researchers found that while interacting with an AI companion can support users, it also coincided with increased signs of distress in their online language.

- My notes, Zoom's AI note-taker feature, works with Zoom Meetings as well as other third-party platforms. AI Companion, including the My notes note-taking tool, is available with paid Zoom Workplace plans.

- The Guidelines for User Age-verification and Responsible Dialogue Act, or GUARD Act, would require age verification for all users who engage with AI chatbots.

- A message that reveals a hard day receives a calm, attentive response.

- There are now 337 active, revenue-generating AI companion apps worldwide.

It's heavily geared toward personal roleplay and creating a story-driven experience with the AI companion. Character.AI stands out for its vast and imaginative world of AI characters. You can have conversations with everyone from historical figures and celebrities to fantasy characters and original creations. Its strength lies in its vibrant community, which constantly creates and shares new bots, ensuring endless possibilities for roleplay and open-ended chats. Still, AI companions can't replace the deep bonds that address loneliness at its roots. Loneliness is the absence of feeling seen, understood, and valued by other people.


OpenAI is currently being sued by the parents of a California teen who took his own life after chatting with ChatGPT about his suicidal thoughts. Other reports have highlighted how AI companion apps can reinforce unhealthy behaviors in users who are mentally ill. Recently, two U.S. attorneys general sent a letter to OpenAI over safety concerns. Fabian Kamberi, CEO and co-founder of the Berlin-based AI gaming company Born, believes the AI companions currently on the market are designed to be exploitative and geared toward isolating users through one-on-one relationships with AI chatbots. Youth mental health and media safety organizations have also urged children and families to stay away from AI companions, especially as the apps have grown more popular.

Of the active apps available today, 17% have an app name that includes the word "girlfriend," compared with 4% that say "boyfriend" or "fantasy." Terms like anime, soulmate, and lover, among others, are mentioned less frequently. Demand for AI "companion" apps beyond big names such as ChatGPT and Grok continues to grow. Of the 337 active and revenue-generating AI companion apps available worldwide, 128 were released so far in 2025, according to new data provided to TechCrunch by app intelligence firm Appfigures. This subsection of the mobile AI market generated $82 million in the first half of the year and is on track to pull in more than $120 million by year-end, the firm's analysis shows. UnitedHealth Group's $1.6 billion AI investment plan for 2026 signals this isn't a one-off experiment.